Downstream bioprocessing accounts for somewhere between 60% and 80% of the total production cost of a biologic drug. That number gets quoted often. What gets quoted less often is why — and what actually happens when the number doesn’t need to be that high.

The equipment isn’t the problem. Chromatography resins, filtration systems, viral inactivation technology: these have all advanced significantly over the past decade. Process intensification is real. Continuous bioprocessing is gaining ground. Single-use technologies have removed whole categories of cleaning validation burden.

The problem is the data layer underneath all of it.

At most biopharmaceutical manufacturing sites today, the information needed to run downstream operations at their actual potential — process parameters, quality results, inventory status, batch history — sits siloed across historians, LIMS, ERP systems, and electronic batch records that were never designed to work together. The people running downstream operations are the integration layer. Their judgment, their memory, and their spreadsheets are what hold the decision-making together.

That works until it doesn’t. And in biologics manufacturing, when it stops working, the consequences show up in yield, in batch release timelines, in deviation reports, and increasingly, in FDA inspection findings.

Key Takeaways

- Downstream bioprocessing drives 60–80% of biologic production costs, making it the highest-leverage area in your manufacturing operation.

- The bottleneck isn’t the equipment; it’s the fragmented data sitting siloed across historian, LIMS, ERP, and batch records.

- Integrated electronic batch documentation cuts release time by up to 40%.

- Over 80% of process deviations trace back to human error in manual data handling, not to process failure.

- Unified manufacturing data turns inspection readiness from a sprint into a permanent state.

What Downstream Bioprocessing Actually Involves

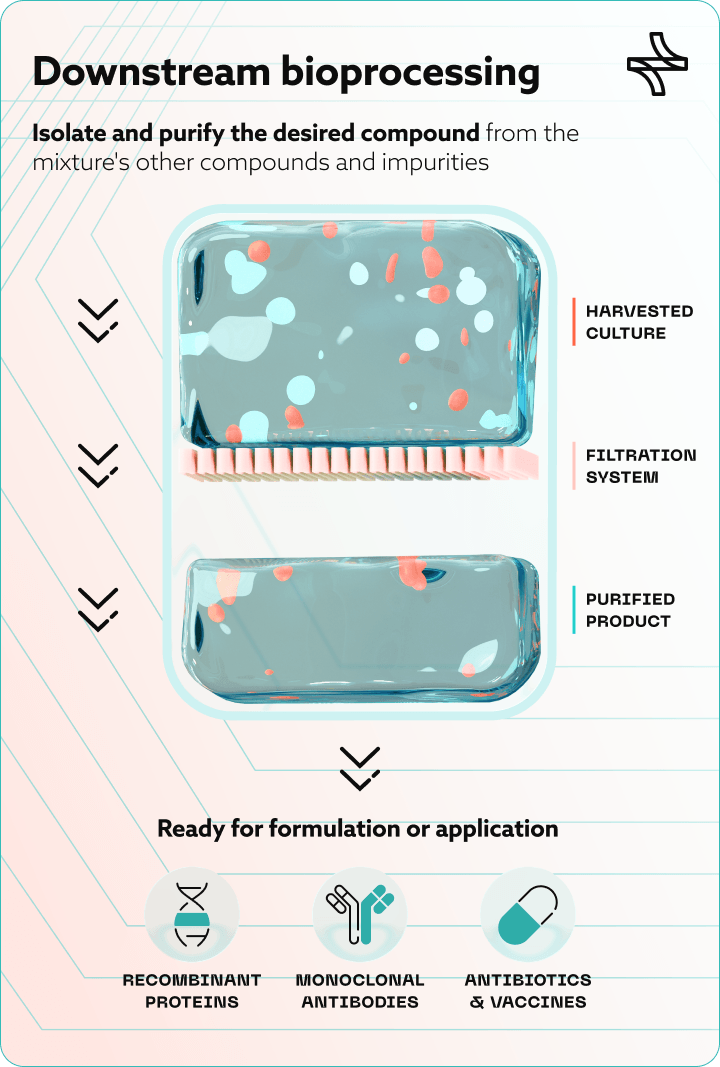

Downstream bioprocessing is the stage of biopharmaceutical manufacturing where the target product — a monoclonal antibody, a recombinant protein, an enzyme, a gene therapy vector — is separated from the complex biological mixture in which it was produced and refined to a purity and concentration suitable for patient use.

It follows upstream bioprocessing, where cells are cultivated in bioreactors under controlled conditions. Once that culture phase is complete, downstream bioprocessing takes over through six sequential unit operations:

- Cell harvesting and clarification. Separates the product from cells and debris via centrifugation or depth filtration. For perfusion-based upstream processes, this happens daily rather than at end-of-run — multiplying downstream complexity significantly.

- Capture chromatography. Isolates and concentrates the target molecule. For monoclonal antibodies, this is almost universally protein A affinity chromatography.

- Intermediate purification. Removes host cell proteins (HCP), residual DNA, and aggregates via ion exchange chromatography.

- Viral inactivation and viral filtration. ICH Q5A(R2) viral safety requirements mandate dedicated steps: low-pH hold for inactivation, nanofiltration for viral clearance.

- Polishing chromatography. Removes trace impurities to meet the ICH Q6B-defined quality specifications established in the regulatory filing.

- UF/DF and formulation. Concentrates and buffer-exchanges the purified molecule into its final drug substance form.

Each step generates data. Process parameters, in-process controls, hold times, column performance metrics, filter flux rates, assay results. The question is not whether that data exists. It is whether anyone can use it.
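To make the cumulative effect of six sequential steps concrete, here is a minimal Python sketch of how per-step recovery compounds across the purification train. The `UnitOperation` class, the `overall_yield` function, and every step yield below are hypothetical illustrations, not figures from any real process.

```python
from dataclasses import dataclass
from math import prod


@dataclass(frozen=True)
class UnitOperation:
    name: str
    step_yield: float  # fraction of product recovered in this step (0 to 1)


# Hypothetical step yields, for illustration only.
# Real values are specific to the product and the process.
TRAIN = [
    UnitOperation("clarification", 0.95),
    UnitOperation("capture chromatography", 0.95),
    UnitOperation("intermediate purification", 0.90),
    UnitOperation("viral inactivation and filtration", 0.98),
    UnitOperation("polishing chromatography", 0.95),
    UnitOperation("UF/DF and formulation", 0.97),
]


def overall_yield(train):
    """Overall recovery is the product of the individual step yields."""
    return prod(op.step_yield for op in train)


print(f"Overall yield: {overall_yield(TRAIN):.1%}")
```

With these assumed values, no single step recovers less than 90%, yet the train as a whole recovers roughly 73%. Small per-step losses compound, which is one reason per-step data matters.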

The Bottleneck Nobody Talks About at Review Meetings

Here is what the data on downstream bioprocessing performance actually shows.

Downstream processing costs account for 60–80% of total biologic drug substance production costs, with protein A resin and viral filtration among the largest single cost drivers. Process intensification approaches can theoretically reduce cost of goods relative to traditional fed-batch processes.

What those approaches do not remove is the operational overhead that sits between equipment capability and the actual outcome. The industry signals the direction: nearly 40% of biomanufacturers are already evaluating continuous or perfusion-based upstream processes, which means downstream decision complexity is increasing, not stabilising.

Not equipment failures. Not process excursions. Data management. The downstream bioprocessing problem, for most established manufacturing sites, is not primarily a chromatography problem. It is a data infrastructure problem.

Where the Data Breaks Down in Downstream Operations

The fragmentation pattern is consistent across sites and has been for years. Four systems hold the data that should be driving downstream decisions and none of them were designed to work together for this purpose.

- Process data historian: Column performance, flow rates, UV absorbance, in-process pH and conductivity. High-resolution, high-volume, largely inaccessible without manual extraction.

- LIMS: In-process control results, release testing, HCP assays, viral clearance data. Designed to manage samples, not provide cross-campaign analytical context.

- ERP: Raw material lots, buffer batches, resin lifecycle tracking. Resin cycle counts are often managed in separate spreadsheets entirely.

- Electronic batch records: Process deviations, operator interventions, equipment notes. Structured and unstructured data rarely integrated with the layers above.

EU GMP Annex 11 and FDA 21 CFR Part 11 require that computerised systems used in GMP-regulated environments are validated, traceable, and auditable. In practice, the challenge is not individual system compliance; it is the gap between systems, where data moves manually and traceability breaks down.

Connecting these four environments for a specific downstream decision requires a human being to serve as the integration layer. That human is usually a process engineer or MSAT analyst, spending days on work that should take hours.
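As a rough illustration of the integration work described above, the following Python sketch groups extracts from the four systems under a shared batch identifier and reports which systems contributed nothing for a batch. All record layouts, field names, and values are hypothetical. Real historian, LIMS, ERP, and batch record exports will look different, and matching on a single `batch_id` key is itself an assumption.

```python
from collections import defaultdict

# Hypothetical extracts, one list of records per source system.
historian = [{"batch_id": "B-001", "step": "capture", "max_uv_mau": 1850.0}]
lims = [{"batch_id": "B-001", "assay": "HCP", "result_ppm": 42.0}]
erp = [{"batch_id": "B-001", "resin_lot": "R-17", "resin_cycles": 63}]
batch_records = [{"batch_id": "B-001", "deviations": ["DEV-0001"]}]


def unify(**sources):
    """Group records from every source system under their shared batch_id."""
    unified = defaultdict(lambda: {name: [] for name in sources})
    for name, records in sources.items():
        for record in records:
            unified[record["batch_id"]][name].append(record)
    return dict(unified)


def missing_sources(batch_view):
    """List the source systems that contributed no records for a batch."""
    return sorted(name for name, records in batch_view.items() if not records)


view = unify(
    historian=historian, lims=lims, erp=erp, batch_records=batch_records
)
print(view["B-001"]["erp"][0]["resin_cycles"])
print(missing_sources(view["B-001"]))
```

The join itself is trivial once the records share a key. The hard part in practice is what the sketch assumes away: getting consistent identifiers and governed, traceable extracts out of each system in the first place.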

What Changes When the Data Comes Together

When manufacturing data from historian, LIMS, ERP, and batch records is unified into a single governed, GxP-compliant environment structured for decision support, three things change across downstream operations.

Decisions become evidence-based rather than experience-dependent

Batch execution digitization and process data unification mean that pooling plans, process parameter adjustments, and resin reuse decisions can be driven by data from comparable historical runs rather than by the judgment of whoever happens to be available. The institutional knowledge becomes part of the system, not hostage to staff turnover.

Quality review becomes faster and more confident

When batch documentation is connected to process data and quality results in a single traceable environment, reviewers work from complete evidence rather than reconstructing the narrative from multiple systems. Batch release cycles shorten. The QA function spends less time chasing information and more time making assessments.

Inspection readiness becomes a continuous state, not a sprint

A peer-reviewed analysis of 1,766 FDA warning letters found that data integrity violations remained elevated across both pre- and post-pandemic inspection periods. Audit-ready documentation and governed traceability, not last-minute remediation, are what separate sites that handle inspections with confidence from those that don’t.

What This Means Depending on Your Role

| Role | What This Means for You |
| --- | --- |
| VP / Head of Manufacturing | Pooling decisions, campaign planning, and deviation investigations that drag are schedule and margin losses. Data unification is a throughput lever, not an IT project. |
| VP of Quality / Head of QA | Most quality risk is invisible until it surfaces in a deviation or inspection. A unified data environment lets you see the exposure before an inspector does, and address it on your terms. |
| QA / Validation Manager | Automated traceability matrix generation, GAMP 5 and FDA Computer Software Assurance (CSA) risk-based validation support, and continuously audit-ready documentation packages change what your team can accomplish per quarter. |
| MSAT / Process Development | Connecting upstream process history to downstream yield outcomes enables predictive modeling, faster comparability studies, and more defensible post-approval change management. |
| Manufacturing IT / Digital | The platform must interface with existing historians, SCADA, MES, LIMS, and ERP without a wholesale infrastructure replacement, while satisfying 21 CFR Part 11 and Annex 11 requirements. |

The Infrastructure That Downstream Bioprocessing Has Always Needed

The downstream bioprocessing steps themselves (clarification, capture chromatography, viral inactivation, polishing, UF/DF, formulation) are well understood. The equipment performs. The science is solid.

What has lagged behind is the digital infrastructure that connects the data those steps generate into a unified, governed, decision-ready environment.

Sites that build that infrastructure — integrating historian, LIMS, ERP, and electronic batch records into a single GxP-compliant platform, applying process data analytics and predictive yield management to downstream operations, and maintaining cross-site compliance monitoring across every campaign — are not just reducing cost. They are reducing risk. They are releasing batches faster. They are building the kind of operational transparency that makes regulatory interactions less adversarial and quality reviews less burdensome.

That is what downstream bioprocessing data unification actually delivers. Not a marginal improvement in chromatography yield. A structural change in how well the entire operation performs — and how confidently it can demonstrate that performance when it matters most.

Frequently Asked Questions (FAQ)

What are the main steps in downstream bioprocessing for biopharmaceutical manufacturing?

Downstream bioprocessing consists of six sequential unit operations: cell harvesting and clarification, capture chromatography (typically protein A affinity for mAbs), intermediate purification via ion exchange chromatography, viral inactivation and viral filtration (low-pH hold and nanofiltration per FDA/EMA requirements), polishing chromatography, and ultrafiltration/diafiltration followed by drug substance formulation.

Why does downstream bioprocessing account for such a large share of biologic production costs?

Downstream bioprocessing drives 60–80% of total biologic drug substance costs primarily due to the high unit cost of protein A chromatography resin, the labour and validation intensity of viral clearance steps, and — critically — the operational overhead of managing disconnected data systems across each unit operation. Process intensification and data-driven decision support are the two levers most likely to structurally reduce that cost base.

How does continuous perfusion upstream processing affect downstream bioprocessing complexity?

Continuous perfusion generates daily harvest bags rather than a single end-of-run bulk harvest, meaning downstream teams must manage an inventory of individually distinct material units — each with a unique upstream process signature affecting downstream yield and quality. Without predictive pooling tools and unified process data connecting historian, LIMS, and ERP, this creates a combinatorial planning challenge that cannot be reliably solved through manual analysis alone.
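To give a sense of scale for that combinatorial challenge, the sketch below counts the ways a set of distinct harvest bags can be grouped into pools. It assumes a simplified model that the article does not spell out: every bag goes into exactly one pool, pools are unordered, and any grouping is allowed. Under that assumption the count is the Bell number, computed here with the Bell triangle. The `bell_number` function is written for this illustration.

```python
def bell_number(n: int) -> int:
    """Number of ways to partition n distinct items into non-empty groups.

    Built row by row with the Bell triangle: each row starts with the last
    value of the previous row, and each further value is the sum of its left
    neighbour and the value above that neighbour.
    """
    row = [1]
    for _ in range(n - 1):
        new_row = [row[-1]]
        for value in row:
            new_row.append(new_row[-1] + value)
        row = new_row
    return row[-1]


for bags in (5, 10):
    print(bags, "bags:", bell_number(bags), "possible pooling plans")
```

Five bags already allow 52 distinct pooling plans, and ten bags allow 115,975. Real constraints such as hold-time limits or pooling only consecutive days shrink the space, but the growth rate shows why ranking the options by hand does not scale.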

What GxP compliance requirements apply to data systems used in downstream bioprocessing?

Data systems in GxP-regulated downstream environments must satisfy FDA 21 CFR Part 11 for electronic records and signatures and EU GMP Annex 11 for computerised systems, and are typically validated using the risk-based approaches described in GAMP 5 and FDA Computer Software Assurance (CSA) guidance. Any platform unifying historian, LIMS, ERP, and batch record data must be validated, auditable, traceable to the individual record level, and capable of producing inspection-ready documentation without manual reconstruction.

What is the most effective way to reduce batch release cycle time in downstream biomanufacturing?

The highest-impact interventions are: replacing paper-based records with integrated electronic batch execution systems (shown to cut release time by up to 40% and reduce audit findings by 60–75%), unifying process data from historian, LIMS, and ERP into a single governed environment so reviewers work from complete evidence, and implementing exception-based review workflows that surface only the records requiring human judgment rather than requiring line-by-line review of an entire multi-hundred-page batch package.
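The exception-based review idea can be sketched in a few lines of Python: compare each recorded value with its acceptance range and pass only the out-of-range entries to a reviewer. The `Entry` class, the function names, and all parameters and limits below are hypothetical. A real implementation would draw its limits from the approved batch record and would also cover non-numeric checks such as missing signatures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entry:
    """One recorded value from a batch record, with its acceptance range."""
    step: str
    parameter: str
    value: float
    low: float
    high: float


def is_exception(entry: Entry) -> bool:
    """True when the recorded value falls outside its acceptance range."""
    return not (entry.low <= entry.value <= entry.high)


def review_queue(entries):
    """Keep only the entries that need a reviewer's judgment."""
    return [entry for entry in entries if is_exception(entry)]


# Hypothetical entries and limits, for illustration only.
batch = [
    Entry("viral inactivation", "hold pH", 3.6, 3.4, 3.8),
    Entry("viral inactivation", "hold time (min)", 75.0, 60.0, 70.0),
    Entry("UF/DF", "final concentration (g/L)", 50.2, 45.0, 55.0),
]

queue = review_queue(batch)
for entry in queue:
    print(f"{entry.step}: {entry.parameter} = {entry.value}")
```

Of the three assumed entries, only the hold time lands in the queue. The reviewer's attention goes to that one record rather than to the whole package.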