The past few years have seen rapid advancements in the integration of Artificial Intelligence (AI) into various aspects of daily life. AI is now present in applications that diagnose medical conditions and in devices that operate without human involvement.

AI has the potential to revolutionize industries, particularly healthcare, by performing tasks with greater accuracy and efficiency than humans.

This article examines the diverse applications of AI, its practical benefits, and the regulatory landscape associated with its use.

Introduction to AI in healthcare: facts and stats

The widespread adoption of AI has significantly transformed society, especially in healthcare. Experts estimate that the global AI healthcare market will grow from over $11 billion in 2021 to more than $188 billion by 2030. Within a decade, AI is expected to become an integral part of the entire healthcare industry. [1]
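The growth implied by those two market figures can be expressed as a compound annual growth rate (CAGR). The sketch below assumes round values of $11 billion for 2021 and $188 billion for 2030. The estimate gives both only as lower bounds, so the result is a rough indication and not a reported statistic. The function name `cagr` is chosen here for illustration.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` years."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Assumed round figures based on the estimate quoted above (USD billions).
market_2021 = 11.0
market_2030 = 188.0

rate = cagr(market_2021, market_2030, years=2030 - 2021)
print(f"Implied annual growth: {rate:.1%}")
```

Under these assumptions the market would need to grow by roughly 37% every year for nine years to reach the projected size.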

AI is defined as the science of creating intelligent systems, including computers and software programs. These systems employ advanced models and techniques to process large volumes of raw data and generate actionable insights.

Contemporary AI works through different methods of learning:

- Machine learning in diagnosis: AI models can be trained on large datasets of medical images and patient data to assist in disease detection and diagnosis. For example, AI algorithms have shown promising results in identifying breast cancer in mammograms with accuracy comparable to expert radiologists.

- Deep learning: A subtype of machine learning that uses multi-layered artificial neural networks to learn from large amounts of data, which makes it capable of more complex tasks. Deep learning models can analyze complex biological data and so support many types of research. A 2023 study reported that AI-powered drug discovery could reduce drug development costs by up to 50%. [2]

- Natural language processing (NLP): A method whose main goal is to interpret human language, whether spoken or written. It is used to interpret documentation, notes, research, and reports. In one study, an NLP-based algorithm (KD-NLP) identified patients at risk of Kawasaki disease (KD) with a sensitivity of 93.6% and a specificity of 77.5% compared to manual clinician note reviews. [3]
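The sensitivity and specificity figures quoted above are ratios taken from a confusion matrix. The following minimal sketch shows how they are computed. The counts are invented for illustration, chosen only so that the ratios match the quoted percentages, and are not the study's data.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Share of truly at-risk patients that the algorithm flags."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg: int, false_pos: int) -> float:
    """Share of not-at-risk patients that the algorithm correctly clears."""
    return true_neg / (true_neg + false_pos)


# Hypothetical counts (NOT the study's data):
# 1,000 at-risk notes and 1,000 not-at-risk notes.
tp, fn = 936, 64
tn, fp = 775, 225

print(f"sensitivity = {sensitivity(tp, fn):.1%}")
print(f"specificity = {specificity(tn, fp):.1%}")
```

A high sensitivity means few at-risk patients are missed, while a lower specificity means a larger share of patients who are not at risk are flagged for further review.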

Through these methods, AI can perform many different tasks in many different fields. In medicine, healthcare providers and researchers have already started putting those abilities to good use.

Key uses of AI in healthcare

The applications of AI in healthcare are many. It has been used in medical devices, diagnostics, imaging, and even in the generation of treatment plans.

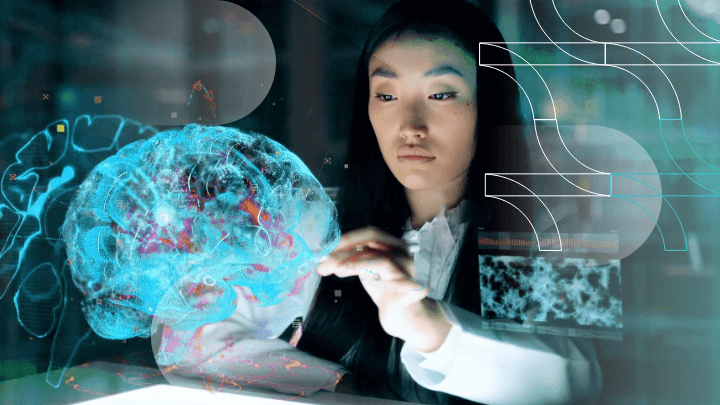

AI technology has been a catalyst for transformation across the whole medical system. By analyzing vast amounts of clinical data, such as electronic health records and medical images, AI systems can provide a wide array of new healthcare services.

Such services include (but are not limited to) diagnosis and disease prevention, the prevention of human error, and support for healthcare professionals in making better-informed decisions.

Diagnostic Applications

AI can help healthcare providers find patterns in patient data that humans might miss. As a result, diagnoses are expected to be quicker and more accurate. This helps medical professionals detect serious illnesses like breast cancer and heart attacks early, and offer personalized treatments and precision medicine to patients.

Diagnostic AI models can do the following:

- Analyze medical images (e.g., X-rays, MRIs, CT scans) to detect anomalies;

- Process patient data to predict risk factors for certain diseases;

- Interpret genetic information to identify potential hereditary conditions;

- Analyze lab results to flag abnormalities or suggest additional tests.

AI reduces the workload for medical staff by automating some diagnostics, so they can focus more on patient care and complex cases. Ultimately this leads to improved efficiency in healthcare delivery, reduced healthcare costs, and better resource utilization.

However, integrating AI into diagnostics brings its own challenges. It is important to ensure AI algorithms are accurate and reliable, as biases or errors in the data could harm patients.

Treatment Personalization

AI applications in healthcare have changed the game for treatment personalization as well.

AI can analyze large amounts of patient data, such as medical records and genetic information, to offer personalized treatment solutions. With AI in healthcare, doctors can create treatment plans tailored to each patient’s unique needs, instead of using a one-size-fits-all approach.

This treatment personalization is also changing the field of cancer care. AI can assist in determining the most effective therapies for certain cancer types using healthcare data from clinical settings, medical imaging, and patient visits.

For example, medical practitioners can forecast a patient’s reaction to a certain medication. This forecast is based on the patient’s genetic makeup, way of life, and clinical context. This allows doctors to optimize treatment outcomes and lower the risk of unfavourable side effects, thereby improving patient safety.

Operational Efficiency

AI has also significantly improved clinical workflows and operational efficiency across the healthcare sector.

AI technologies are being readily integrated into electronic health record systems. This allows practitioners to streamline administrative tasks and focus on quality patient care.

Using natural language processing and machine learning techniques, AI can automate time-consuming operations like patient visit scheduling, medical record management, and clinical data processing. This decreases the strain on healthcare personnel and reduces human error in clinical settings. As a result, patient safety improves and diagnoses become more accurate.

AI applications in healthcare are also shown to be very helpful in analyzing large amounts of health data to find patterns and trends. This data helps medical professionals to make more educated decisions and more accurate diagnoses.

As AI develops, its potential to improve health outcomes and change healthcare systems becomes more apparent. This suggests that healthcare delivery will become more accurate, patient-centered, and efficient in the future.

Predictive Analytics

AI’s influence on healthcare extends far beyond operational gains, with predictive analytics emerging as a game-changing application in the healthcare industry.

Deep learning and advanced algorithms can be used to anticipate probable health risks and consequences. Such knowledge allows healthcare workers to transition from reactive to proactive treatment approaches.

In this way, illnesses can be prevented before they emerge. For example, AI could pinpoint individuals at high risk of having a heart attack or help diagnose cancer in its early stages. This is important because it allows for quicker interventions and improved health outcomes.

In the context of population health, AI-powered prediction models help to better anticipate disease outbreaks and manage public health emergencies.

In addition, artificial intelligence tools are changing the drug discovery and development process by accelerating the identification of potential therapeutic targets and the completion of clinical trials.

The future of healthcare offers more individualized therapies, improved disease prevention tactics, and ultimately a major improvement in the standard of patient care. Overall health equity is likely to improve as more medical facilities integrate these functions.

Notable Examples of AI in Action

The application of AI has resulted in amazing advancements in the healthcare field.

A number of notable instances highlight the technology’s transformative potential:

- Identifying cancer: Google Health has developed sophisticated algorithms that can identify lung cancer from CT scans with greater precision than radiologists, potentially saving countless lives via an early diagnosis.

- Scan accuracy: At Google, machine learning algorithms are being used in medical imaging to evaluate MRIs, X-rays, and other medical images with unprecedented accuracy.

- Drug research: AI is expediting the medicine development process by predicting drug-target interactions and improving molecular structures. Some companies have made significant advances in protein folding prediction, which could alter medicine design and our knowledge of diseases at the molecular level. Research of this kind has the potential to substantially improve patient outcomes.

- Healthcare chatbots and virtual assistants: They provide 24/7 assistance, enabling better access to medical information, and relieving healthcare staff of additional manual labor.

The list can go on, as in the last few years AI applications have seen a significant boom. The new developments lead to a constant supply of new successful case studies of AI applications. These successes show that AI has the capacity to bring a lot of positive developments in healthcare as a whole.

Benefits of AI for Patients, Providers, and The Healthcare System

AI in medicine and healthcare brings about a new era of improved medical practices and improved patient outcomes by providing large benefits to both patients and healthcare workers.

As noted earlier, AI technology offers more personalized treatments based on each patient’s unique genetic profile and medical history, which might lead to more effective therapies and fewer side effects.

In radiology, AI-enhanced image analysis detects subtle abnormalities that human eyes might miss. This further helps make diagnosis more accurate. In addition, healthcare applications of AI might improve patient safety by lowering prescription mistakes and recognizing potential interactions between drugs.

Beyond personalized treatments and early detection, AI is now powering robotics and robotic surgery assistants. They can help medical professionals be more precise during operations. A more precise operation means shorter recovery times for patients.

AI is also a valuable ally for medical professionals in clinical practice, complementing human intellect with data-driven insights. AI supplies healthcare professionals with the most recent findings and recommended procedures, as it can quickly evaluate enormous volumes of clinical and medical literature.

The future of AI in healthcare research and practices is sure to bring forth even more potential uses. The possibilities are limitless, yet a few questions remain.

Ethical Considerations

The big question in AI research is an ethical one. Any AI development must answer concerns about the potential for unethical use of the new technology.

Data privacy is one of the primary concerns. Healthcare AI needs an extensive amount of patient data to function effectively. This makes AI a prime target for malicious attacks from hackers or other nefarious parties.

Given these concerns, the secure storage and ethical handling of patient data becomes imperative. Healthcare centres ought to prevent the mishandling of protected health information in any way possible. Failing to do so may result in grave breaches of patient privacy, which erode public confidence in healthcare institutions.

Another issue is the potential for bias in AI systems. Because AI applications in healthcare use algorithms trained on past clinical data, any biases already present in that data may be preserved. This may lead to unequal treatment among different patient populations.

AI systems may, for instance, be more successful in treating or detecting illnesses in some demographic groups while providing less precise or efficient care in others. The unfortunate result of bias would be a spike in health inequities.
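One simple way to check for the kind of disparity described above is to compare a model's detection rate across demographic groups. The sketch below is a minimal illustration and not an established audit procedure. The record layout, the group labels, and the function name `sensitivity_by_group` are all assumptions, and the records are invented.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return {group: sensitivity} for (group, has_condition, flagged) records."""
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for group, has_condition, flagged in records:
        if has_condition:
            positives[group] += 1
            if flagged:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


# Invented example records: (demographic group, has condition, flagged by model)
records = [
    ("group_a", True, True), ("group_a", True, True),
    ("group_a", True, True), ("group_a", True, False),
    ("group_b", True, True), ("group_b", True, False),
    ("group_b", True, False), ("group_b", True, False),
    ("group_a", False, False), ("group_b", False, True),
]

rates = sensitivity_by_group(records)
gap = max(rates.values()) - min(rates.values())
print(rates, f"gap = {gap:.2f}")
```

A large gap between groups would suggest that the model detects illness less reliably in some populations and that its training data deserves closer review.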

The continued use of AI in healthcare raises concerns about the role of human intelligence in clinical decision-making. There is a risk that over-reliance on AI could diminish the role of healthcare workers, potentially eroding the vital human element in healthcare.

Regulatory Landscape In Europe and the USA

The ethical concerns that AI brings can only be addressed through robust legislation. Some jurisdictions and regulators have been proactive in doing just that.

A prime example of model AI regulation is the European Union, and the US is expected to follow its model soon. We shall explore both regulatory landscapes to learn from their successes and failures.

AI Regulations in the European Union

The European Union is already addressing the implications of AI and the issues that may arise from it, both by applying pre-existing legal frameworks and by creating new regulations such as the proposed AI Act.

The EU General Data Protection Regulation (GDPR) regulates and monitors AI only in part. It governs the personal data of patients who are being treated by medical professionals. AI in health systems, however, is more focused on innovation.

Healthcare innovation usually takes place through clinical trials far more than through direct patient treatment. Although GDPR protects patient data, it does not fully cover the information of research participants.

The proposed AI Act introduced a risk-based way to handle the consequences of medical AI, built on the key principles of ethical AI. GDPR and the AI Act have to be aligned so that the goals of both can be achieved together.

GDPR places restrictions on automated decision-making (ADM) and the processing of health data, except in cases where patient consent is obtained or for public interest purposes.

The proposed AI Act aims to provide a technology-neutral definition of AI systems within EU law and introduce a classification system that assigns different requirements and obligations based on a risk-based approach.

AI Regulations in the United States

The FDA has not taken the same steps as the European Union to set up comprehensive regulation for medical artificial intelligence. However, the FDA’s Center for Devices and Radiological Health (CDRH) is considering a regulatory framework based on the total product lifecycle.

Such a framework would allow for real-time modifications and adaptability for these medical devices without limiting their effectiveness or risking their safety.

The FDA usually reviews medical devices through an appropriate premarket pathway. Such pathways include De Novo classification, premarket clearance (510(k)), and premarket approval.

The FDA has the authority to evaluate and approve modifications to medical devices, including software as a medical device, if the modification carries a significant risk to patients. This means that any changes made to medical devices or their software that could potentially impact patient safety must undergo review and clearance by the FDA.

The traditional manner in which the FDA regulates medical devices was not designed for adaptive artificial intelligence and machine learning technologies. The need for foolproof medical devices that have been changed and improved through AI and ML may actually require more devices to go through the FDA’s premarket review.

How Do Leading Healthcare AI Firms Navigate Regulatory Approvals: Two Examples

Given how much Artificial Intelligence in healthcare has evolved and changed the whole industry, it is normal for different companies to pioneer innovation. There are many startups already working with AI to create revolutionary technologies for the healthcare world.

Arterys

Arterys, a pioneering healthcare company, achieved a significant milestone in 2017 by obtaining clearance from the FDA for using deep learning and cloud technologies in clinical services. Arterys sought to address the challenge of reading and analyzing the large output files produced by the 4D Flow MRI imaging technology. This technology focused on simplifying the medical diagnosis of heart defects in newborns and children.

Recognizing the need for further automation, Arterys combined deep learning algorithms with cloud computing GPUs to develop a solution for automating the measurement of heart ventricles. By harnessing the power of artificial intelligence, the company eliminated the manual calculation process previously performed by providers.

Butterfly Network

Ultrasounds play a crucial role in diagnosing various conditions, including blood clots, gallstones, and cancerous tumors. However, the high cost of advanced ultrasound machines, which can exceed $100,000, creates a significant barrier for underserved communities worldwide, limiting their access to medical imaging. [4]

To address this issue, Butterfly Network has developed an innovative solution by introducing the world’s first hand-held whole-body imager. These compact probes, designed to be attached to smartphones, enable imaging capabilities that can be transported to even the most remote locations.

Regulating personal data in relation to new technology can be difficult to understand, purely because of the amount of information out there. Many healthcare organizations and companies are interested in what the future holds, especially for artificial intelligence and even more so for its use in healthcare.

Thankfully, the bodies responsible for such regulations are taking the necessary precautions and steps to ensure the safety of this technology.

To Sum It Up

Artificial Intelligence (AI) is changing the healthcare industry and offers numerous benefits across various domains:

- In mental health, AI-powered tools assist in early detection and personalized treatment plans to enhance patient outcomes.

- For medical records management, AI streamlines the organization and retrieval of electronic medical records and boosts the efficiency of healthcare providers.

- In revenue cycle management, AI optimizes billing processes, reduces errors and expedites claims processing.

- Life science companies can now use AI for drug discovery and development and thus accelerate the introduction of new treatments.

- Healthcare technology advancements, such as AI-driven imaging data analysis, aid in disease diagnosis and treatment applications, particularly in cancer treatment.

These innovations lead to better clinical decision support and streamline clinical processes. Many healthcare organizations are integrating AI into daily clinical practice, for example by using traditional machine learning techniques to analyze radiology images and patient records, or by integrating AI video analytics for hospital care.

These integrations support clinical documentation and decision-making, ultimately benefiting medical providers and healthcare executives.

However, the adoption of AI also raises concerns regarding data privacy and the need for robust regulations to ensure ethical use. As AI continues to evolve, its role in healthcare is expected to expand, offer new opportunities for improving patient care, and continuously boost operational efficiency.