Your downstream process is validated. It runs on schedule. The batch records look clean. And yet, at commercial scale, the cost of goods keeps climbing. The source is the process itself: optimised for consistency, but not for revealing what it quietly leaves behind on every batch.

This is the problem at the centre of large molecule manufacturing. The losses that matter most don’t appear as deviations. They don’t trigger alerts. They look exactly like normal operations because they are normal operations, running precisely the way the process was designed to run.

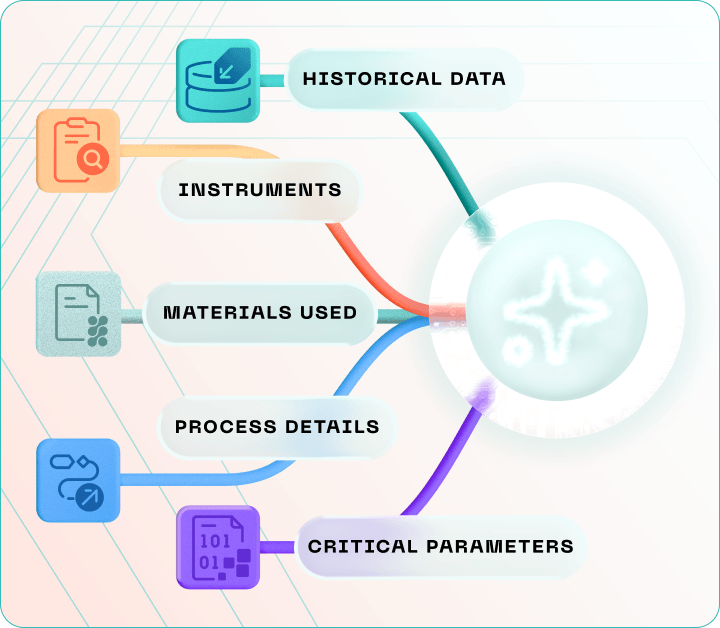

Finding them requires unified process data, fit-for-purpose analytics built for GxP environments, and the ability to look across unit operations simultaneously rather than one variable at a time.

5 Key Takeaways

- Validated, stable downstream processes can carry recurring product losses caused by design and timing inefficiencies, not equipment failure or human error.

- Large molecule biologics are highly sensitive to process conditions, meaning small parameter interactions across unit operations accumulate into yield gaps that never appear as individual deviations.

- Downstream processing accounts for the majority of production costs in biologics manufacturing, making it the highest-leverage area for sustainable COGS reduction.

- Fragmented data across historians, LIMS, MES, and batch records is the primary reason most organisations cannot detect interaction-driven yield losses even when the data to find them already exists.

- Unified data, multidimensional analysis, and predictive optimisation working together convert process insights into structural, site-wide cost advantages without disrupting validated production.

What Large Molecules Are and Why They’re Sensitive to Process

Large molecule therapeutics (biologics) are complex drugs produced using living cells rather than chemical synthesis. Monoclonal antibodies, recombinant proteins, and cell and gene therapies are the most common examples. Unlike small molecule drugs, which follow predictable chemical reactions, biologics are grown: cells are cultured in bioreactors, the target molecule is expressed, then recovered and purified through a sequential downstream train.

This biological origin is what makes them uniquely valuable and uniquely vulnerable. Because large molecules are structurally complex, small changes in timing, flow rate, or operating parameters can affect both product quality and recoverable yield. Maintaining batch-to-batch consistency is, as documented in peer-reviewed literature, “nontrivial”: a direct function of molecule complexity interacting with process complexity at every stage.1 It is also why AI and machine learning are increasingly applied to pharmaceutical manufacturing: to make parameter interactions visible at a scale and speed that human analysis alone cannot reach.

What Downstream Processing Is and Why It’s the Cost Driver

Upstream manufacturing produces the molecule. Downstream processing recovers it.

After the bioreactor harvest, the product-containing fluid moves through a sequence of unit operations, each designed to bring the target molecule closer to final purity. The first step is primary clarification (centrifugation and depth filtration) to remove cells and debris. This is where permanent product losses begin; anything lost here cannot be recovered downstream. The clarified fluid then moves into capture chromatography, typically Protein A affinity chromatography for mAbs, which achieves the bulk of purification in a single highly selective step. Viral inactivation and polishing steps follow to remove residual process-related impurities and meet safety requirements. Finally, the product is concentrated, buffer-exchanged, and prepared for administration in formulation and fill/finish.

Each step consumes expensive materials, resin capacity, and labour time. Any molecule lost at any point has already absorbed the full upstream cost of the batch, making downstream yield the most financially sensitive variable in large molecule manufacturing. This is why the digital transformation of pharma manufacturing increasingly focuses on downstream operations as its highest-return opportunity.
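
Because the unit operations run in sequence, overall recovery is the product of the individual step recoveries, so modest per-step losses compound. The sketch below illustrates that arithmetic; the step names follow the train described above, but every yield figure is a hypothetical placeholder rather than data from a real process.

```python
from math import prod

# Hypothetical step yields (fraction of product recovered at each step).
# Illustrative numbers only; they are not taken from any real process.
STEP_YIELDS = {
    "clarification": 0.95,
    "protein_a_capture": 0.95,
    "viral_inactivation": 0.98,
    "polishing": 0.92,
    "formulation": 0.97,
}


def overall_yield(step_yields):
    """Overall recovery is the product of the individual step recoveries."""
    return prod(step_yields.values())


def gain_from_step_improvement(step_yields, step, new_yield):
    """Change in overall yield if a single step's recovery is improved."""
    improved = dict(step_yields)
    improved[step] = new_yield
    return overall_yield(improved) - overall_yield(step_yields)


if __name__ == "__main__":
    print(f"overall yield: {overall_yield(STEP_YIELDS):.3f}")
    gain = gain_from_step_improvement(STEP_YIELDS, "clarification", 0.97)
    print(f"gain from raising clarification to 0.97: {gain:.3f}")
```

With these assumed figures, five steps that each look healthy in isolation still recover only about 79% of the harvested product overall.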

The Process in Practice: Where Yield Loss Hides

The three most consistently invisible sources of downstream yield loss share one characteristic: none of them appears in batch records as a problem.

| Loss source | Mechanism | Why it stays invisible |
| --- | --- | --- |
| Hold-up volumes | Product-containing fluid remains in tubing, vessel connections, and filter housings after each transfer step | Losses are small per step, and only significant when viewed cumulatively across all unit operations and all annual batches |
| Centrifugation parameters | Flow rate, bowl speed, feed concentration, and discharge timing interact to affect recoverable product and shift subtly with upstream cell culture variation | Validated specification ranges are broader than optimal operating windows; pass/fail tells you nothing about performance within the range.2 |
| Shift-to-shift variability | Operators applying the same SOP with slightly different timing decisions create a measurable performance spread | Invisible per batch; only significant as a pattern across many batches and shifts over time |
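
The hold-up row is easiest to appreciate as arithmetic. The following sketch adds up product left behind at each transfer point and scales it to a year of batches. The locations, volumes, concentrations, and batch count are all hypothetical values chosen only to show the calculation.

```python
# Each entry: (location, hold-up volume in litres, product concentration in g/L).
# All values are hypothetical and exist only to show the arithmetic.
HOLD_UPS = [
    ("centrifuge to depth filter line", 1.5, 3.0),
    ("depth filter housing", 4.0, 3.0),
    ("capture column lines", 2.0, 15.0),
    ("polishing skid lines", 1.5, 12.0),
    ("final concentration loop", 1.0, 50.0),
]

BATCHES_PER_YEAR = 40  # assumed campaign size


def loss_per_batch_g(hold_ups):
    """Grams of product left behind per batch, summed over all locations."""
    return sum(volume_l * conc_g_per_l for _, volume_l, conc_g_per_l in hold_ups)


def annual_loss_g(hold_ups, batches_per_year):
    """Per-batch hold-up loss scaled to a full year of production."""
    return loss_per_batch_g(hold_ups) * batches_per_year


if __name__ == "__main__":
    for name, volume_l, conc in HOLD_UPS:
        print(f"{name}: {volume_l * conc:.1f} g")
    print(f"per batch: {loss_per_batch_g(HOLD_UPS):.1f} g")
    print(f"per year:  {annual_loss_g(HOLD_UPS, BATCHES_PER_YEAR):.1f} g")
```

No single line item would justify an investigation on its own; the figure that matters is the annual total, which is only visible once every step and every batch is counted together.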

Why Standard Tools Were Never Built for This

Most biopharmaceutical manufacturers are trying to solve a multidimensional problem with univariate tools.

| Approach | What it examines | What it misses |
| --- | --- | --- |
| Standard batch review | One parameter at a time, against specification limits | Parameter interactions across sequential unit operations |
| Univariate PAT monitoring | Individual sensor readings in isolation | Patterns that only emerge when multiple variables are evaluated together |
| Multidimensional analysis | Multiple parameters simultaneously, across hundreds of batches | Nothing: this is precisely what reveals interaction-driven losses |

Research comparing univariate single-frequency analysis with multivariate PLS approaches for real-time bioprocess monitoring demonstrated that multivariate analysis produced significantly higher correlations with actual process behaviour, an advantage that was “particularly evident during cell death, where tracking [cell density and viability] have historically presented the greatest challenge.”3

When the source of a yield loss is distributed across multiple unit operations (elevated hold-up in one step, suboptimal centrifuge parameters in another, variability at the transition between them), no single-parameter investigation finds it. The loss appears as baseline noise: consistently present, apparently unavoidable, invisible against the background of normal operations.
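
A small synthetic example shows why a one-variable-at-a-time review can miss such a loss entirely. In the sketch below, yield depends only on the interaction between two coded parameters (labelled flow rate and discharge timing purely for illustration). The data and the yield formula are invented, and the Pearson correlation is a simple stand-in for the multivariate methods discussed above, not a reproduction of them.

```python
from itertools import product
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def synthetic_yield(flow, timing):
    """Invented model: yield drops only when both settings are high or both low."""
    return 85.0 - 2.0 * flow * timing


# 20 synthetic batches: every combination of low (-1) and high (+1), five times.
runs = list(product((-1, 1), repeat=2)) * 5
flow = [f for f, _ in runs]
timing = [t for _, t in runs]
yields = [synthetic_yield(f, t) for f, t in runs]
interaction = [f * t for f, t in runs]

if __name__ == "__main__":
    print("yield vs flow alone:       ", round(pearson(flow, yields), 3))
    print("yield vs timing alone:     ", round(pearson(timing, yields), 3))
    print("yield vs flow x timing:    ", round(pearson(interaction, yields), 3))
```

Each parameter on its own shows no relationship with yield, while the combined term accounts for all of the variation. Real process data is noisier and higher-dimensional, but the failure mode is the same.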

This is the analytical gap. But before it can be closed, there is a prior problem most organisations have not solved.

The Real Obstacle: Data Living in Five Systems That Don’t Talk to Each Other

In a typical biopharmaceutical manufacturing environment, process data is scattered. Historian data lives in one system. Batch records are in another. LIMS results are in a third. MES operations data is in a fourth. QA deviation and investigation reports are in a fifth. Each system was built to serve a specific operational function, not to enable cross-system pattern recognition across hundreds of batches.

Pharma 4.0 frameworks exist specifically to address this shift from siloed departmental data to integrated, cross-functional operational intelligence. The yield loss is recorded somewhere across those systems. The parameter interactions that cause it are documented in fragments. Without unification, the connection between cause and consequence is invisible.

This is why fit-for-purpose manufacturing intelligence matters in a way that standard ERP or MES platforms do not. ERP systems were built for resource planning. MES systems for execution management. Neither was designed to correlate centrifuge parameters from the historian with cell lysis indicators from the LIMS with batch yield gaps from the batch record in real time, across hundreds of batches, in a GxP-compliant environment. That class of analysis requires a platform built specifically for pharmaceutical manufacturing: one that unifies data across all relevant sources, understands the regulatory context in which it was generated, and presents a coherent operational picture to the people who need to act on it.
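
At its simplest, unification means joining records from each system on a shared batch identifier. The sketch below does this with plain dictionaries. The field names (`bowl_speed_rpm`, `lysis_marker`, `step_yield_pct`), the batch IDs, and all values are hypothetical, and a production platform would also have to handle timestamps, units, audit trails, and access control, none of which is shown here.

```python
# Hypothetical extracts from three systems, keyed by batch ID.
HISTORIAN = {
    "B001": {"bowl_speed_rpm": 7200, "feed_flow_lpm": 10.0},
    "B002": {"bowl_speed_rpm": 7200, "feed_flow_lpm": 12.5},
    "B003": {"bowl_speed_rpm": 7500, "feed_flow_lpm": 12.5},
}
LIMS = {
    "B001": {"lysis_marker": 110},
    "B003": {"lysis_marker": 185},  # B002 result not yet available
}
BATCH_RECORD = {
    "B001": {"step_yield_pct": 94.2},
    "B002": {"step_yield_pct": 93.8},
    "B003": {"step_yield_pct": 91.0},
}


def unify(*sources):
    """Inner join on batch ID: keep only batches present in every source."""
    common = set.intersection(*(set(source) for source in sources))
    rows = []
    for batch_id in sorted(common):
        row = {"batch_id": batch_id}
        for source in sources:
            row.update(source[batch_id])
        rows.append(row)
    return rows


def missing_batches(*sources):
    """Batches that appear in at least one source but not in all of them."""
    everything = set.union(*(set(source) for source in sources))
    common = set.intersection(*(set(source) for source in sources))
    return everything - common


if __name__ == "__main__":
    for row in unify(HISTORIAN, LIMS, BATCH_RECORD):
        print(row)
    print("incomplete:", sorted(missing_batches(HISTORIAN, LIMS, BATCH_RECORD)))
```

Reporting the batches that fail to join is a deliberate choice: gaps between systems are themselves a finding, and silently dropping them would hide exactly the fragmentation described above.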

What Changes When You Can See the Whole Picture

When process data is unified and the right analytical framework is applied, three capabilities become operational.

The first is cross-parameter pattern recognition across batches. Analysis spanning over 400 commercial manufacturing lots and screening 30 input factors identified just 3 critical parameters driving the majority of performance variation, visible only through simultaneous cross-lot analysis. A hybrid AI/ML-mechanistic modelling approach achieved a 12% yield increase and a 33% reduction in high-molecular-weight impurities, verified at lab, pilot, and commercial scale. The study’s conclusion: traditional experimental designs are “resource-demanding and insufficient to capture interactions of multiple parameters.”4

The second is systematic root cause analysis. Detecting a yield gap is the first step; closing it permanently requires tracing that gap back to the specific parameter interactions, unit operations, and operating conditions generating it. GMP-compliant platforms built for root cause analysis and CAPA make this traceable, documentable, and permanently closeable rather than a recurring unexplained variance in batch records.

The third is predictive optimisation. Research demonstrating a digital twin of a continuous mAb purification process integrated with online PAT tools showed that model-based control could maintain greater than 85% process yield while absorbing feed variability, demonstrating the technical feasibility of real-time CQA control without manual intervention.5 The answer is earlier intervention, driven by data rather than deviation reports. An industry-wide survey involving experts from Merck, Bristol-Myers Squibb, GlaxoSmithKline, Pfizer, Boehringer Ingelheim, and BioPhorum rated integrated real-time monitoring tools as the highest business value across both upstream and downstream operations.6

The Financial Case for Getting This Right

Process understanding (the same equipment, the same biology, the same validated process, looked at with greater analytical depth) is where the financial gains live.

A Bristol-Myers Squibb case study comparing conventional mAb manufacturing at 1,000 L scale with an intensified process at 1,000 and 2,000 L reported a 6.7 to 10.1-fold reduction in consumables cost through redesigned chromatography steps, multi-column capture, and integrated polishing.7 Research from Sartorius and BiosanaPharma found that model-based optimisation of CHO perfusion culture through a dynamically optimised media exchange rate schedule delivered a 50% increase in volumetric productivity.8

The pattern across both results is consistent: the gains come from understanding the process more completely, and acting on that understanding systematically. This is the operational purpose of pharmaceutical data management and manufacturing intelligence platforms built for this environment.

What Fit-for-Purpose Manufacturing Intelligence Delivers

Closing the data and tooling gap requires capabilities that standard platforms were never designed to provide.

| Capability | What it enables |
| --- | --- |
| Unified data layer | Cross-system correlation of historian, LIMS, MES, batch records, and QA data, making interaction-driven losses visible for the first time |
| Real-time intelligence | Continuous monitoring across all data sources; performance patterns identified as they develop, not after the loss is locked in |
| Predictive optimisation | Models that anticipate yield-impacting conditions before they occur, supporting right-first-time production decisions |
| Root cause analysis | Systematic tracing of yield gaps and deviations to their process origins, with GxP-compliant CAPA documentation |
| Regulatory readiness by design | Audit-ready data environment built on GMP architecture; evidence of process control is continuous, not reconstructed for inspections |
| Sustainability | Reduced waste, lower buffer and resin consumption, more efficient resource utilisation as a direct output of yield recovery |

The manufacturing intelligence solution BGO Software has built for pharmaceutical clients connects the data sources that standard platforms treat as separate systems and makes the resulting cross-batch picture available in real time to the people running the process: engineers, process scientists, and QA teams who currently have to pull data manually from four different places before they can begin to ask the question. Digital validation tools and GMP-by-design architecture ensure that audit-readiness is built into every batch, not assembled retrospectively.

Building a Structural Advantage, Not a One-Time Fix

The most durable value of data-driven yield optimisation is not the initial gain; it is what becomes possible afterward.

When root causes have been traced, optimised windows defined, and real-time monitoring put in place, those insights transfer across shifts, across production lines, across facilities. A yield improvement at one site becomes the operating baseline everywhere that process runs.

A process improvement fixes what is currently visible. A manufacturing intelligence programme builds the infrastructure to catch what is not visible yet and to do it before the loss occurs, rather than after the batch is complete. A yield gap traced to a centrifuge load factor, recovered through a data-informed operating adjustment, and captured in a system that every operator on every shift can access: that is what transforms a process improvement into a manufacturing asset. The Pharma 4.0 framework describes this shift precisely: from departmental silos to integrated, continuously optimising operations built on real-time data.

The compounding effect over batches, over sites, over years, is where the real financial case lives.

Sources:

1. Rathore A, Malani H. Expert Opinion on Biological Therapy. 2021;22(2):123–131. https://doi.org/10.1080/14712598.2021.1973425

2. Xu A, et al. Biotechnology Progress. 2025;42(1):e70084. https://doi.org/10.1002/btpr.70084

3. Lomont JP, Smith JP. International Journal of Pharmaceutics. 2023;649:123630. https://doi.org/10.1016/j.ijpharm.2023.123630

4. Wang R-Z, et al. mAbs. 2026;18(1):2644662. https://doi.org/10.1080/19420862.2026.2644662

5. Tiwari A, et al. Biotechnology and Bioengineering. 2022;120(3):748–766. https://doi.org/10.1002/bit.28307

6. Gillespie C, et al. Biotechnology and Bioengineering. 2021;119(2):423–434. https://doi.org/10.1002/bit.27990

7. Xu J, et al. mAbs. 2020;12(1):1770669. https://doi.org/10.1080/19420862.2020.1770669

8. Agarwal P, et al. Biotechnology Progress. 2024;41(1):e3503. https://doi.org/10.1002/btpr.3503

Frequently Asked Questions (FAQ)

What is yield optimization in biopharmaceutical manufacturing?

Yield optimisation in biopharmaceutical manufacturing is the systematic identification and recovery of product loss across the downstream production workflow, including harvesting, centrifugation, chromatography, and filtration. Advanced yield optimisation combines unified process data with multidimensional analysis and predictive modelling to find losses invisible to traditional single-variable methods, particularly those caused by interactions between multiple process parameters across sequential unit operations.

What is downstream processing in large molecule manufacturing, and why does it drive COGS?

Downstream processing is the series of unit operations that recovers and purifies the target biologic molecule after bioreactor harvest. For monoclonal antibodies and other large molecule therapeutics, this typically includes primary clarification by centrifugation and depth filtration, Protein A affinity capture chromatography, viral inactivation, polishing steps, and final formulation. Downstream processing drives the majority of production costs in biologics manufacturing because each unit operation consumes expensive resins, buffers, single-use consumables, and labour, and any product lost at these steps has already absorbed the full upstream manufacturing cost. For CDMOs and contract manufacturers, downstream yield directly determines batch profitability and client COGS commitments.

What causes batch-to-batch variability in biopharmaceutical manufacturing?

Batch-to-batch variability arises from the interaction of multiple critical process parameters (CPPs), including cell culture conditions, harvest timing, centrifugation settings, chromatography load factors, and buffer preparation, none of which operate in isolation. Small deviations in upstream cell culture performance propagate into downstream processing, shifting feed conditions for clarification and capture steps. Shift-to-shift operating differences, equipment state variation, and hold-up volume inconsistencies compound across unit operations to produce a measurable yield and quality spread that no single-batch review will detect. Continuous process verification (CPV), enabled by unified real-time data, is a recognised mechanism, consistent with the process-monitoring expectations of ICH Q10, for identifying and closing this variability systematically.

Why does data fragmentation prevent effective yield optimisation and root cause analysis?

Data fragmentation prevents effective yield optimisation because the parameter interactions that drive yield loss are distributed across multiple disconnected systems: process historians, LIMS, MES, electronic batch records, and QA deviation management platforms. Without a unified data layer, it is operationally impossible to correlate centrifuge performance data from the historian with cell lysis markers from the LIMS and yield gaps from the batch record, the combination that reveals the actual root cause. Root cause analysis in a GxP-compliant environment requires traceable, integrated data across all these sources, with audit trails that satisfy FDA 21 CFR Part 11 and EU Annex 11 requirements.

What is the role of process analytical technology (PAT) in yield optimization?

Process analytical technology (PAT) is the FDA-endorsed framework for using real-time measurement and analysis of critical quality attributes (CQAs) and CPPs to ensure process understanding and consistent product quality. In downstream bioprocessing, high-value PAT tools include inline variable path-length UV/VIS spectroscopy for concentration monitoring, online liquid chromatography for charge variant tracking, and multiangle light scattering (MALS) for product quality characterisation across purification steps. When integrated with a unified data platform, PAT tools shift quality control from end-of-batch testing to continuous, real-time process monitoring, enabling deviation detection and correction before yield loss is locked in.

Can predictive optimisation be implemented in a GMP-validated environment without revalidation?

Yes. Predictive optimisation operates on historical and real-time process data rather than through experimental interventions in validated production, meaning it can identify improvement opportunities and simulate operating adjustments without triggering a revalidation cycle. This approach aligns with ICH Q8 (pharmaceutical development and design space), ICH Q9 (risk-based decision-making), and ICH Q10 (pharmaceutical quality systems). In practice, this means adjusting operating targets within an established design space using data-informed rationale, with all changes documented in a GxP-compliant audit trail. For CDMOs and integrated pharma manufacturers, this framework supports batch release confidence and audit-readiness simultaneously.

What is continuous process verification (CPV) and how does it reduce yield loss?

Continuous process verification (CPV) is the ongoing collection and statistical analysis of process and product data throughout commercial manufacturing to confirm that a process remains in a state of control. The term is defined in ICH Q8(R2); it corresponds to Stage 3 of the FDA process validation guidance, where it is called continued process verification, and it supports the process-monitoring expectations of ICH Q10. CPV replaces periodic retrospective batch review with real-time, forward-looking process monitoring. For downstream processing in large molecule manufacturing, CPV connects data from unit operations across the full train to detect performance drift before it becomes a yield loss event or a CAPA trigger. Fit-for-purpose manufacturing intelligence platforms that unify historians, LIMS, MES, and batch record data are the enabling infrastructure for CPV at commercial scale.
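
As a rough illustration of what detecting drift before it becomes a yield loss event can look like, the sketch below applies two common control-chart checks to step yield: limits at three standard deviations around a baseline mean, and a run rule that flags several consecutive batches below the centre line. The yield values are invented, and the run length of eight is an assumed setting; a real CPV programme would choose its rules and limits as part of its own statistical plan.

```python
from statistics import mean, stdev

# Hypothetical step yields (%) from a stable baseline period.
BASELINE = [92.1, 91.8, 92.4, 92.0, 91.9, 92.3, 92.2, 91.7, 92.0, 92.1]
# Hypothetical recent batches that drift slowly downward.
RECENT = [92.0, 91.9, 91.8, 91.9, 91.7, 91.8, 91.6, 91.7, 91.6, 91.5]


def control_limits(baseline):
    """Return (lower limit, centre line, upper limit) at three standard deviations."""
    centre = mean(baseline)
    sigma = stdev(baseline)
    return centre - 3 * sigma, centre, centre + 3 * sigma


def out_of_limits(values, lower, upper):
    """Indices of points that fall outside the control limits."""
    return [i for i, v in enumerate(values) if v < lower or v > upper]


def downward_run(values, centre, run_length=8):
    """Index where a run of consecutive points below centre first reaches
    run_length, or None if no such run occurs."""
    streak = 0
    for i, v in enumerate(values):
        streak = streak + 1 if v < centre else 0
        if streak >= run_length:
            return i
    return None


if __name__ == "__main__":
    lower, centre, upper = control_limits(BASELINE)
    print(f"limits: {lower:.2f} to {upper:.2f}, centre {centre:.2f}")
    print("points outside limits:", out_of_limits(RECENT, lower, upper))
    print("downward run completes at index:", downward_run(RECENT, centre))
```

In this invented series no individual batch breaches a limit, so a pass/fail review would see nothing, yet the run rule flags the drift by the eighth batch.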