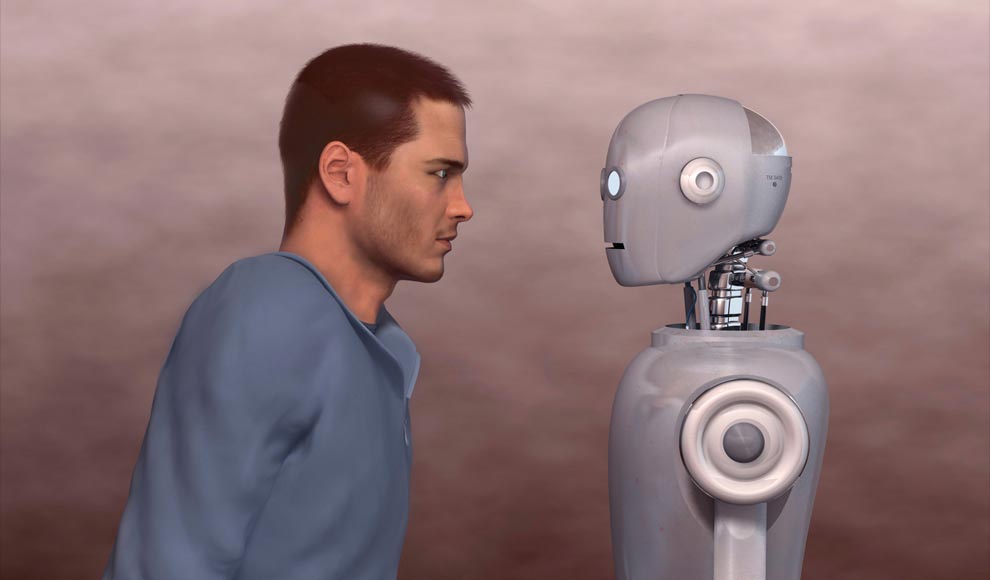

There is an invincible, indestructible, and co-dependent connection between humans and computers. And no. It is not wireless. The relationship combines organic thinking and machine calculation, neither of which can easily exist without the other. In many ways, this connection shapes the present as we know it and promises the future we have all seen in expensive Hollywood productions. Quite often, it takes imagined physical form as droids, robots, and cyborgs. Most importantly, however, this relationship is based on the differences and similarities between humans and computers and on the ways in which what one of them lacks can be supplied by the other.

What is the main connection between humans and computers?

Humans and computers inhabit one and the same world, in which they seem to exist in symbiosis. Put in the simplest terms, computers need people in order to be created in the first place, while people need computers in order to evolve. The progress of media, banking, electricity, telecommunications, energy efficiency, railways, and other means of transportation depends on technology. The more advanced the technology is, the more advanced these sectors become in return.

Just think of modern-day cars, which are basically computers on wheels. Without innovative software projects and dozens of sensitive components (infotainment systems, brakes, cockpits, and so on) that function like artificial neurons passing electrical impulses through a nervous system, the automotive sector would not be as successful as it is now. But computers are not only integrated into the structure of vehicles. They are integrated into the very process of making a vehicle, too.

Car manufacturers have come a long way in applying high-tech methods to building automobiles. Nowadays, there is a more effective generation of auto assembly lines staffed by collaborative computerized robots working alongside people, part machine and part human. Carmakers such as Kia, Ford, Haval, and BMW, for example, have used and still use robotic machines to make their assembly lines more cost-efficient and energy-efficient.

But it does not end with the automotive industry. Other industries, including pharma, rely heavily on computers for tasks ranging from filing and record keeping to monitoring and service offshoring. And if science would not be the same without computers, what about the IT sector itself? Without computers, IT would not exist: a program would still be just a TV show, memory would be something one loses with age, and a hard drive would be a long trip on the road.

Similarities between Humans and Computers

It is true that, through machine learning, computers become smarter and more sophisticated as time passes. Characteristics very close to those of human beings are ascribed to machines, and this is perceived as a potential threat. Think of The Terminator. Think of Chappie. Think of The Matrix. Think of all of those movies that depict worlds dominated by computers and artificial intelligence. People are no longer saying, “Oh, computers are really stupid. They can’t really think. They cannot really understand. They cannot really read Shakespeare.”

Quite the contrary. Computers are clever, sometimes even cleverer than humans. Their evolution never slows down and shows no sign of leveling off. Even though they do not yet operate entirely on a human level, computers steadily improve their intellectual capabilities. But what do they have in common with humans, apart from the fact that both are most valuable when they are working and being productive?

Learning

Just like people, machines can learn too. They gain knowledge, and then they gain more knowledge on how to enhance what they already know. Some might argue that this is possible only because humans program computers, but think of students and their teachers. Isn’t this exactly what happens in the human-computer situation as well? One is the giver, who transmits information and knowledge to the receiver. What matters is the interaction between them and its outcome.

And what is the outcome? Computers that can absorb new information and put it to use, thanks to techniques such as deep learning. Deep Blue and Watson are typical examples of computers outplaying humans. The former is a chess-playing computer created by IBM, an American technology and consulting corporation. On February 10, 1996, Deep Blue won a game against the reigning world chess champion, Garry Kasparov. Kasparov went on to win that match, but Deep Blue defeated him in the 1997 rematch. Watson, in turn, demonstrated a different kind of artificial skill and depth on the quiz show Jeopardy! in 2011. It competed against two of the show’s best contestants, Brad Rutter and Ken Jennings, and managed to outplay them. Without human interference, of course.

Understanding

Machine-learning-based systems also enable computers to show some sort of understanding. This learning is based on data rather than on manually written rules. Just like humans, then, computers come to understand not only through what they are told but through what they see and observe on their own. Companies and websites such as Netflix, Amazon, and eBay take advantage of such machine-learning abilities in order to suggest products to their users based on their preferences and previous choices.
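To make the idea of suggesting products from previous choices more concrete, here is a minimal sketch in Python. It is not how Netflix, Amazon, or eBay actually work; the user names, titles, and the `recommend` function are all invented for illustration, and the only assumption is that people with overlapping histories tend to like similar things.

```python
from collections import Counter

# Hypothetical viewing histories; the users and choices are made up.
HISTORY = {
    "ana": {"Alien", "Blade Runner", "The Matrix"},
    "ben": {"Alien", "The Matrix", "Chappie"},
    "cleo": {"Notting Hill", "Amelie"},
}


def recommend(user, history, top_n=2):
    """Suggest items chosen by users whose past choices overlap with `user`'s."""
    seen = history[user]
    scores = Counter()
    for other, items in history.items():
        if other == user:
            continue
        overlap = len(seen & items)  # shared choices act as a similarity weight
        if overlap == 0:
            continue  # nothing in common, so this user tells us nothing
        for item in items - seen:
            scores[item] += overlap
    # Highest score first; ties are broken alphabetically for stable output.
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [item for item, _ in ranked[:top_n]]


print(recommend("ana", HISTORY))
```

Nobody told the program that "ana" might enjoy a particular film. The suggestion falls out of the data alone, which is the point the paragraph above is making.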

Another example is the Chinese website Baidu, which uses a leading-edge image recognition system. The system can understand an uploaded picture and search for other pictures that are similar to it in many ways (position, facial expression, contours, shapes, color, and so on). This suggests that computers and computer-based systems can comprehend what they see, generate descriptions, and deliver results based on the data they receive. It is not exactly human performance and understanding, but it is analogous to them.
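How might a machine decide that two pictures are "similar"? One common approach is to turn each image into a list of numbers (a feature vector) and compare the vectors. The sketch below assumes such vectors already exist; the three-number vectors, the file names, and the `most_similar` helper are hypothetical, and this is not a description of Baidu's actual system.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(query, catalog):
    """Return the name of the catalog image closest to the query vector."""
    return max(catalog, key=lambda name: cosine_similarity(query, catalog[name]))


# Hypothetical feature vectors; a real system would compute these from pixels.
CATALOG = {
    "portrait_smiling": [0.9, 0.1, 0.2],
    "landscape_sunset": [0.1, 0.8, 0.6],
    "portrait_serious": [0.7, 0.2, 0.1],
}

uploaded = [0.85, 0.1, 0.25]  # made-up features of the picture a user uploads
print(most_similar(uploaded, CATALOG))
```

Real systems use vectors with hundreds or thousands of dimensions, learned from large image collections, but the comparison step can be as simple as this.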

Processing power and speed

Drawing parallels between human brains and computer databases brings up a variety of other similarities. Both have memory or storage capacity; both rely on electrical signals, whether in nerve cells or in circuits; both can retrieve and transmit data; both are divided into partitions; and both connect pieces of data in order to reach logical, workable conclusions. Because they can analyze and link scattered and disparate data, computers are able to build logical structures, which in turn allow them to understand and learn.

Humans vs technology

There are also aspects of the world we live in where people and machines differ quite a bit.

Problem solving and decision making

Problem-solving and decision-making processes involve complex cognitive tasks that can be performed by both humans and computers. Here are some key considerations regarding the comparison between humans and computers in problem-solving and decision-making:

Human Advantages:

- Contextual understanding: Humans possess the ability to understand complex contexts, nuances, and emotional aspects that may be challenging for computers to grasp.

- Intuition and creativity: Humans can rely on intuition, creativity, and divergent thinking to generate innovative solutions or make decisions based on non-linear patterns and subjective factors.

- Ethics and moral judgment: Humans can navigate ethical dilemmas and make decisions based on moral principles, empathy, and social considerations that go beyond logical analysis.

Computer Advantages:

- Speed and accuracy: Computers can process large amounts of data quickly and perform calculations accurately, enabling faster analysis and decision-making in certain scenarios.

- Data-driven insights: Computers can analyze vast datasets, identify patterns, and generate data-driven insights that may not be immediately apparent to humans, supporting evidence-based decision-making.

- Consistency and objectivity: Computers can apply the same rules consistently, avoiding the moods, fatigue, and subjective influences that may affect human decision-making, although they can still reproduce biases present in their data or design.

- Automation and scalability: Computers can automate repetitive tasks and scale their capabilities, allowing for efficient handling of large volumes of data or complex calculations.

Creativity and innovation

Humans possess unique qualities that contribute to creativity and innovation, such as intuitive thinking, contextual understanding, non-linear thinking, and the ability to incorporate emotional and aesthetic considerations into their work. On the other hand, computers excel in data-driven insights, algorithmic creativity, rapid processing and simulation capabilities, as well as automation and optimization. While humans bring subjective and nuanced perspectives, computers offer objective analysis, efficient processing, and the ability to handle vast amounts of data. Both humans and computers have distinct strengths that contribute to creativity and innovation, and leveraging these strengths can lead to powerful outcomes in various domains.

Interaction and communication

Human interaction and communication involve the use of natural language, including complex sentences, emotional expression, and the conveying of abstract concepts. Non-verbal cues such as facial expressions, body language, and gestures enhance communication, while empathy and social dynamics allow for understanding, adapting to social cues, building relationships, and navigating social complexities. In contrast, computer interaction relies on programming languages and structured protocols to transmit and receive data, with a limited understanding of natural language and little ability to interpret non-verbal cues. Computers provide prompt and predictable responses based on pre-programmed instructions or algorithms, ensuring consistent and reliable interactions.

Potential for bias and error

Humans:

- Subjectivity and cognitive biases: Humans are susceptible to various biases and errors, including cognitive biases influenced by personal beliefs, experiences, and emotions. These biases can affect decision-making, judgment, and data interpretation.

- Limited capacity and fatigue: Humans have limitations in attention span, memory, and cognitive capacity, leading to potential errors due to information overload, multitasking, and fatigue.

- Unconscious biases: Humans can unknowingly harbor biases based on factors such as race, gender, or age, which can influence decision-making and actions.

Computers:

- Algorithmic biases: Computers can be subject to bias if the algorithms used in their programming are flawed or biased. Biases present in training data or algorithmic design can lead to discriminatory outcomes or skewed results.

- Lack of contextual understanding: Computers lack the contextual understanding that humans possess, which can lead to errors in interpreting complex or ambiguous situations.

- Dependency on data quality: Computers heavily rely on data inputs, and if the data is incomplete, inaccurate, or biased, it can result in flawed outputs and erroneous conclusions.
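The points about algorithmic bias and data quality can be illustrated with a deliberately tiny example. The sketch below "trains" a model that simply repeats the most common past decision for each group. The groups, the decisions, and the skewed history are all invented; real models are far more complex, but they can inherit a skew from their training data in much the same way.

```python
from collections import defaultdict


def train_majority_model(records):
    """Learn, for each group, the most common decision seen in past records."""
    counts = defaultdict(lambda: defaultdict(int))
    for group, decision in records:
        counts[group][decision] += 1
    # For every group, keep the decision with the highest count.
    return {group: max(seen, key=seen.get) for group, seen in counts.items()}


# Hypothetical, deliberately skewed history of past decisions.
history = (
    [("A", "approve")] * 8
    + [("A", "reject")] * 2
    + [("B", "approve")] * 3
    + [("B", "reject")] * 7
)

model = train_majority_model(history)
print(model)
```

The program contains no rule that treats group B differently. It rejects group B only because the historical data did, which is how a flawed input turns into a flawed output.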

Impact on society and the workforce

In terms of impact on society and the workforce, humans bring unique skills such as creativity, critical thinking, and interpersonal interactions, which contribute to innovation and complex decision-making. On the other hand, computers offer automation, efficiency, scalability, and consistency in tasks. While automation may lead to job displacement, it also creates new opportunities and roles. Finding a balance between human capabilities and technological advancements is crucial for maximizing productivity and ensuring a smooth transition in the workforce.

Drawing conclusions from all this, one comes to realize that the human-like capabilities of computers are growing quickly, at rates faster than those of any person. There is no doubt that machines follow commands, but it is the way in which they follow those commands that amazes us. What is more, computers show that they can function as separate agents without the direct presence of a programmer or engineer. They learn, comprehend, and respond in the most profound ways. They come up with images based on descriptions, produce answers based on questions, and evoke meaning based on understanding. And just like people, computers adapt and evolve over time. But is this enough to make them superior once and for all? Let’s find out in our Humans vs Computers series, Part II!